VidiNet Cognitive Services – AWS Speech to Text

There are many reasons to transcribe the spoken content in your media. The first that comes to mind is, of course, subtitling, not only in the natively spoken language but also in translated versions. According to multiple studies, subtitled videos significantly improve reach, CTA, reactions, and share rates. The second reason is to help you find the content you are looking for: you remember the soundbite the CEO delivered in that speech, but where is it?

From a business perspective, it is also essential to understand how Search Engine Optimization (SEO) is affected by subtitling. Video in itself is not text-based, so any information that tells Google what the video content describes benefits the ranking of the video. Subtitling your video in not just one language but many could therefore improve your SEO and visibility. Makes sense?

These are just some of the benefits of making subtitles available for your content, preferably in more than one language.

However, for some of you, there are also new regulations to consider. E.U. Directive 2016/2102 now states that all member states must include subtitling on all official video information to comply with the U.N. Convention on the Rights of Persons with Disabilities (CRPD). This includes video information from government, schools, and other official organizations, including private companies that deliver information for public viewing.

Similar regulations have been present in the U.S. for many years. The most recent regulation, the Twenty-First Century Communications and Video Accessibility Act of 2010, requires closed captions on material that is produced and distributed in the U.S. and can be accessed in the U.S.

Transcribe your content – but how?

Traditionally, transcribing speech to text has been a purely human task. With the introduction of new machine learning algorithms, this is now changing, and we can see how machines and humans can interact and cooperate in this area. Machine learning transcription software is becoming more and more accurate. With today’s accuracy at around 80 % or higher, depending on the quality of the material, software-based services can offload a lot of the initial work that would typically be done by humans.

So, instead of spending 8 hours manually transcribing a 1-hour video, you can improve your subtitling distribution workflow by offloading the first 80 % of the work to a cognitive automatic subtitling algorithm such as VCS (VidiNet Cognitive Services) in VidiNet.
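The arithmetic behind that claim can be sketched in a few lines. This is only a back-of-the-envelope model: the function name and parameters are hypothetical, and it assumes that the share of work offloaded to the machine translates linearly into saved manual hours, which will vary with the quality of the material.

```python
def remaining_manual_hours(
    video_hours: float,
    manual_hours_per_video_hour: float = 8.0,  # figure quoted in the article
    automated_share: float = 0.8,              # "first 80 %" from the article
) -> float:
    """Estimate the manual hours left after automatic transcription.

    Assumption: effort saved is directly proportional to automated_share.
    """
    if not 0.0 <= automated_share <= 1.0:
        raise ValueError("automated_share must be between 0 and 1")
    if video_hours < 0 or manual_hours_per_video_hour < 0:
        raise ValueError("hours must not be negative")
    total_manual = video_hours * manual_hours_per_video_hour
    return round(total_manual * (1.0 - automated_share), 2)


if __name__ == "__main__":
    # 1-hour video: 8 hours of manual work, 80 % of it offloaded
    print(remaining_manual_hours(1.0))
```

Under these assumptions, a 1-hour video leaves roughly 1.6 hours of manual review and correction instead of 8 hours of transcription from scratch.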

With the introduction of VCS, we now take VidiCore API and VidiNet to the next level. VidiNet Cognitive Services is a core architecture designed to manage cognitive services from a growing number of providers on the market. In this first release of VCS, you will find cognitive services based on Amazon Transcribe.

VidiNet and AWS Speech to Text – a short introduction

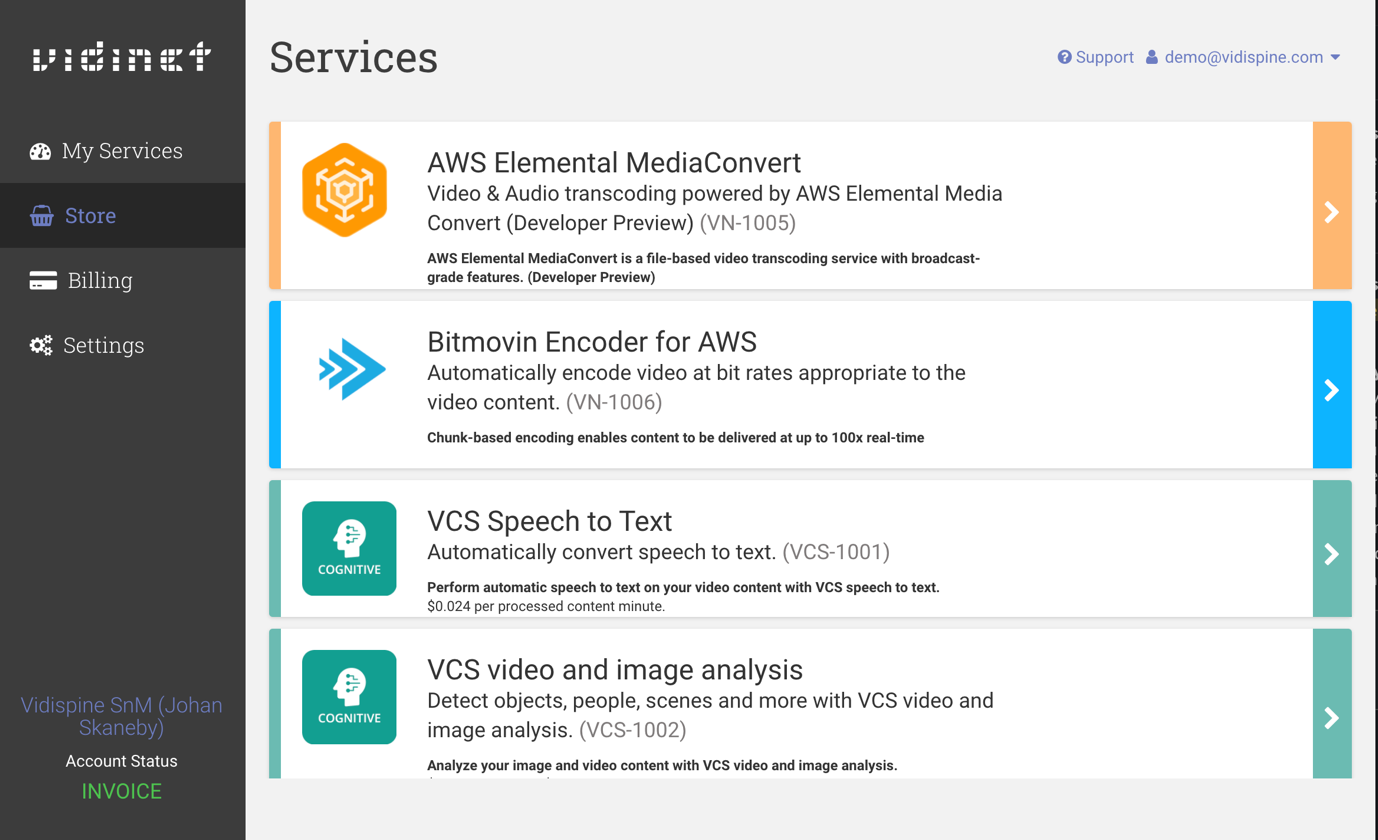

VidiNet is our media supply chain platform where Vidispine customers add and configure different services for their on-premises, cloud, or hybrid environment. Here, you can now access VCS Speech to Text and add the service to your infrastructure, or just to your trial account.

Let's take a quick look at the UI and how you can test the VCS Speech to Text functionality.

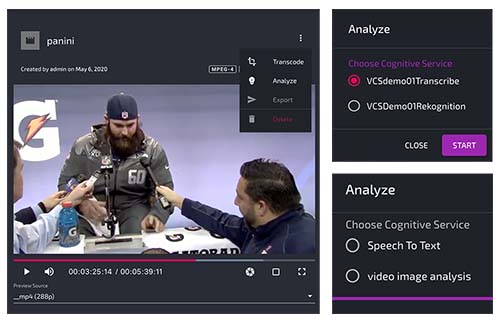

After uploading your content, choose “Analyze” to enable the AWS transcription service for your video. VidiNet will provide you with a cost estimate for the service as a basis for your calculations.

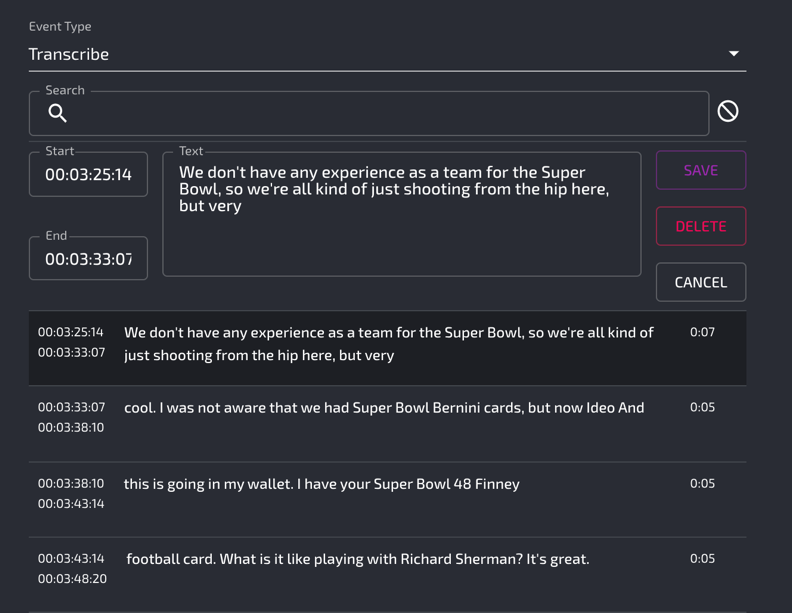

When the analysis and transcription have finished, you can easily search and navigate the results.

The Vidispine UI

It is essential to understand that our VidiCore Development Toolkit (VDT) allows you to design any user interface (UI) that works for your environment. In these examples, we use a UI that offers basic functionality for testing the VidiCore API. As you can see, the VCS Speech to Text service provides you with not only a transcription and timecodes but also a simple interface for manual adjustment of the auto-generated text.

The VidiCore Development Toolkit (VDT) is free and includes multiple packages:

- Low-level JavaScript SDK for front end and back end

- React wrappers

- Prebuilt components using https://material-ui.com/ (React components using Google's Material Design CSS)

With this brief introduction to the VCS Speech to Text service in VidiNet, it is time for you to test the service for yourself. Remember that the functionality and accuracy of machine learning algorithms also improve over time.

If you are using a transcription service or are working manually with speech to text today, you will most likely benefit from VCS Speech to Text in VidiNet.

Amazon Transcribe Pricing – how much?

When you try out VCS Speech to Text, you will get an automatic cost estimate based on the Amazon Transcribe pricing and the source duration for the job you are starting. Use this estimate as a basis for calculating the price of automating speech to text in your media supply chain.

Currently, we charge 0.024 USD per content minute, but remember that you only pay when you use the service. You can scale up or pause your media supply chain whenever your business model requires it.

This flexibility is just one of many advantages when building your media supply chain with Vidispine.
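To get a feel for the numbers before you start a job, the per-minute price quoted above can be turned into a rough estimate. The sketch below is not the VidiNet estimator: the function name is hypothetical, the rate is simply the figure from this article, and it assumes billing is strictly proportional to duration with no minimum charge and no rounding up to whole minutes.

```python
from decimal import Decimal, ROUND_HALF_UP

# Per-minute price quoted in this article; a snapshot, not a live rate.
PRICE_PER_CONTENT_MINUTE_USD = Decimal("0.024")


def estimate_transcription_cost(duration_seconds: float) -> Decimal:
    """Return a rough cost estimate in USD for one source file.

    Assumption: cost is duration in minutes times the per-minute price,
    rounded to whole cents.
    """
    if duration_seconds < 0:
        raise ValueError("duration_seconds must not be negative")
    minutes = Decimal(str(duration_seconds)) / Decimal(60)
    cost = minutes * PRICE_PER_CONTENT_MINUTE_USD
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    # A 1-hour video: 60 minutes * 0.024 USD per minute
    print(estimate_transcription_cost(3600))
```

With this model, a 1-hour video comes to 1.44 USD. The estimate shown in VidiNet when you choose “Analyze” remains the figure to rely on.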
